As part of a series on expertise and COVID-19, the Expertise Under Pressure team asked Matt Bennett, currently a lecturer in Philosophy at the University of Cambridge, to write a piece based on his new article “Should I do as I’m told? Trust, Experts, and COVID-19” forthcoming in the Kennedy Institute of Ethics Journal.

Trusting the science

Radical public health responses to the pandemic around the world have asked us to make unprecedented changes to our daily lives. Social distancing measures require compliance with recommendations, instructions, and legal orders that come with undeniable sacrifices for almost all of us (though these sacrifices are far from equally distributed). These extreme public measures depend for their success on public trust.

Trust in these measures is both a necessary and desirable feature of almost all of the public health strategies currently in place. Necessary, because it seems fair to assume that such extreme measures cannot be effectively introduced, much less maintained, solely through policing or other forms of direct state coercion. These measures require a significant degree of voluntary compliance if they are to work. And desirable, because even if totalitarian policing of pandemic lockdown were viable, it also seems fair to assume that most of us would prefer not to depend on a heavily policed public health strategy.

The same kind of trust is necessary for many kinds of policy, particularly where that policy requires citizens to comply with rules that come at significant cost, and coercion alone would be ineffective. But what is distinctive about our pandemic policies is that they depend not just on public trust in policy, but public trust in the science that we are told informs that policy.

When governments follow the science, their response to the pandemic requires public trust in experts, raising questions about how we might develop measures not just to control the spread of the virus, but to maintain public confidence in the scientific recommendations that support these measures. I address some of these questions in this post (I have also addressed these same questions at greater length elsewhere).

My main point in what follows is that when public policy claims to follow the science, citizens are asked not just to believe what they are told by experts, but to follow expert recommendations. And when this is the case, it can be perfectly reasonable for a well-informed citizen to defer to experts on the relevant science, but nonetheless disagree with policy recommendations based on that science. Until we appreciate this, we will struggle to generate public support for science-led policy that is demanded by some of our most urgent political challenges.

Following the science?

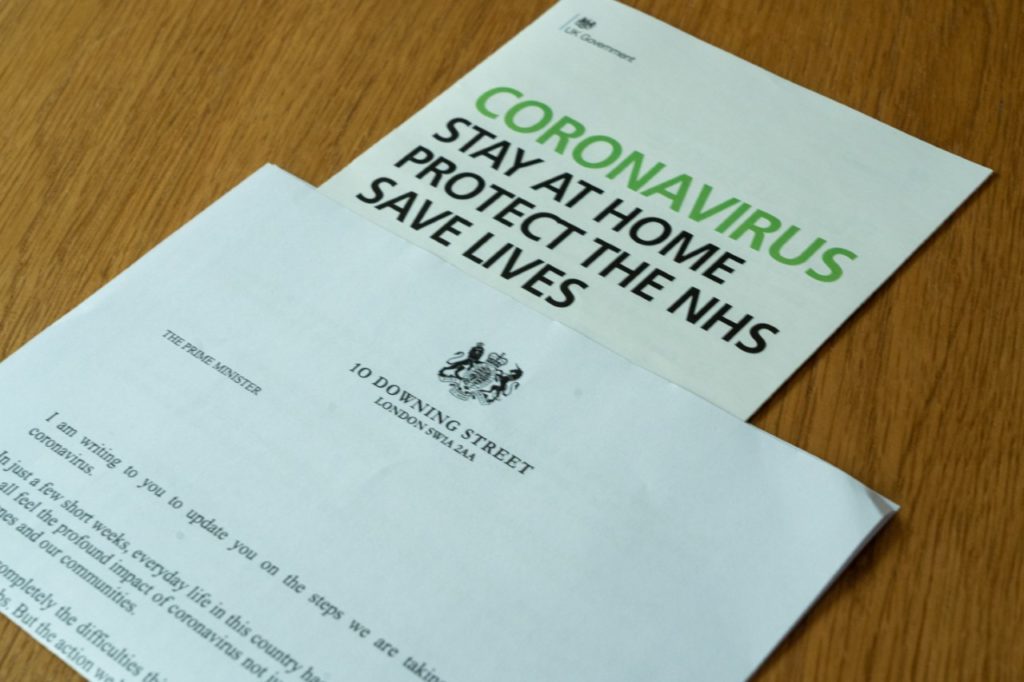

Before I get to questions about the kind of public trust required by science-led policy, we need to first address the extent to which pandemic responses have indeed been led by experts. In the UK, the government’s publicly visible response to the pandemic began with repeated claims that ministers were “following the science”. 10 Downing Street began daily press conferences in March, addresses to journalists and the public in which the Prime Minister was often accompanied by the government’s Chief Medical Officer, Chris Whitty, or Chief Scientific Advisor, Patrick Vallance (sometimes both). In the months that followed, Vallance and Whitty played a prominent role in communicating the government’s public health strategy, standing alongside government ministers in many more press conferences and appearing on television, on radio, and in print.

But have ministers in fact followed the science? There are reasons to be sceptical. One thing to consider is whether a reductive referral to “the science” hides a partial or selective perspective informing government decisions. There is of course no one “science” of the pandemic. Different disciplines contribute different kinds of relevant information, and within disciplines experts disagree.

And some have observed that the range of disciplines informing UK government in the early spring was inexplicably narrow. The Scientific Advisory Group for Emergencies (SAGE) evidence cited by government in March included advice from epidemiologists, virologists, and behavioural scientists. But some disciplines were conspicuous in their absence among the government’s experts: economists, sociologists, and psychologists, for example, can provide important insights into the economic and social effects of lockdown that ought to be considered by any genuinely evidence-based policy.

Another reason to be sceptical about the UK government’s claim to follow the science is that several SAGE meetings included Boris Johnson’s infamous Chief Advisor Dominic Cummings, with some members of SAGE stating they were worried about undue influence from Cummings. The problem is not just that “the science” government claimed to follow was incomplete, but also that the government could well have been directing the advice it was claiming to follow.

And perhaps the claim to “follow the science” has been exaggerated. While ministers defer to scientists, those same scientists have been eager to point out that their role is exclusively advisory. Government experts have consistently cleaved to a division of labour that is a cornerstone of so-called “evidence-based policy”: experts provide facts, politicians make decisions.

Nonetheless, it has been clear throughout the UK’s response to the pandemic that government has seen fit to communicate its policy as if it were unequivocally following expert recommendations. Daily 10 Downing St press conferences, running from March to June, began with Boris Johnson at the podium flanked on either side by the government’s Chief Medical Officer and Chief Scientific Advisor. The optics of this are not hard to read.

And even in recent weeks, in which the government has stopped its daily press conferences and dialled down the rhetoric of its science-led policy, ministers still claim that they defer to expert advice, despite those same experts repeatedly distancing themselves from government decision making. As recently as the end of July, in announcing the reintroduction of stricter lockdown measures in parts of Greater Manchester, Lancashire, and Yorkshire, Matt Hancock repeatedly deferred to evidence that a rise in infections in these areas has been caused not by a return to work, or by opening pubs, but by people visiting each other in their homes.

We are still being asked by the government to trust in recommendations provided by experts, even if the government is not being led by evidence in the way it would have us believe. The communications strategy may not be honest, but it has been consistent, and because the government is inviting the public to think of its policy as science-led, its public health strategy still depends on public trust in science. We are asked to accept that government is following the recommendations of experts, and that we must follow suit.

Believing what we are told

I have said above that public trust in science is both a necessary and desirable feature of an effective public health response to the pandemic. But it is desirable only insofar as it is well-placed trust. I presume we don’t want the public to put their faith in just any self-identified expert, regardless of their merits and the level of their expertise. We want the public to trust experts, but only where they have good reason to do so. One important question this raises is what makes trust in experts reasonable, when it is. A second important question is what we can do to ensure that the conditions for reasonable trust in experts are indeed in place.

Philosophers of science and social epistemologists have had a lot to say about when and why it is reasonable to trust experts. The anxiety that many philosophers of epistemic trust respond to is a perceived threat to knowledge about a range of basic facts that most of us don’t have the resources to check for ourselves. Do I know whether the Earth is flat without travelling? Should I believe that penicillin can be used to treat an infection without first studying biochemistry? Though it’s important that we know such things, knowledge of this kind doesn’t meet the same evidence requirements that apply to beliefs about, say, where I left my house keys.

Thankfully, there is an influential way of rescuing knowledge about scientific matters that most of us aren’t able to verify for ourselves. In the 1980s philosopher of science John Hardwig proposed a principle that, if true, rescues the rationality of the beliefs that we hold due to our deference to experts.

Hardwig maintained that if an expert tells me that something is the case this is enough reason for me to believe it too, provided that I have good reason to think that the expert in question has good reason to believe what they tell me. Say that I have a doctor who I see regularly, and I have plenty of evidence to believe that they are competent, well-informed, and sincere. On this basis I have good reason to think that my doctor understands, for example, how to interpret my blood test results, and will not distort the truth when they discuss the results with me. I thus have good reason to think that the doctor has good reason to believe what they tell me about my test results. This is enough, Hardwig maintains, for me to form my own beliefs based on what they tell me, and my epistemic trust has good grounds.

Doing what we are told

But can the same be said when the expert isn’t just asking me to believe something, but is recommending that I do something? Is it still reasonable to trust science when it doesn’t just provide policy-relevant facts, but leads the policy itself?

Consider an elaboration of the doctor example. Say I consult my trusted doctor to discuss the option of a Do Not Attempt CPR (DNACPR) instruction. My doctor is as helpful as always, and provides me with a range of information relevant to the decision. In light of my confidence in the doctor’s professionalism, and if we accept Hardwig’s principle, we can say that I have good reason to believe the information my doctor gives me.

Now consider how I should respond if my doctor were to tell me that, in light of facts about CPR’s success and about my health, I should sign a DNACPR (and set aside the very worrying medical ethics violation involved in a doctor directing a patient in this way regarding life-sustaining treatment). I have good reason to believe the facts they have given me relevant to a DNACPR. Do I also have good reason to follow their advice on whether I should sign? Not necessarily.

For one thing, my doctor’s knowledge regarding the relevant facts might not reliably indicate their ability to reason well about what to do in light of the facts. My doctor might know everything there is to know about the risks, but also be a dangerously impulsive person, or conversely an excessively cautious, risk-averse person.

And even if I think my doctor probably has good reason to think I should sign – I believe they are as wise as they are knowledgeable – their good reason to think I should sign is not thereby a good reason for me. The doctor may have, for instance, some sort of perverse administrative incentive that encourages them to increase the number of signed DNACPRs. Or, more innocently, they may have seen too many patients and families suffer the indignity of a failed CPR attempt at the end of life, or survive CPR only to live for just two or three more days with broken ribs and severe pain. And maybe I have a deeply held conviction in the value of life, or in the purpose of medicine to preserve life at all costs, and I might think this trumps any purported value of a dignified end of life. I may not even agree with the value of dignity at the end of life in the first place.

Well-placed trust in the recommendation of an expert is more demanding than well-placed trust in their factual testimony. A good reason for an expert to believe something factual is thereby a good reason for me to believe it too. But a good reason for an expert to think I should do something is not necessarily a good reason for me to do it. And this is because what I value and what the expert values can diverge without either of us being in any way mistaken about the facts of our situation. I can come to believe everything my doctor tells me about the facts concerning CPR, but still have very good reason to think that I should not do what they are telling me to do.

Something additional is needed for me to have well-placed trust in expert recommendations. When an expert tells me not just what to believe, but what I should do, I need assurance that the expert understands what is in my interest, and that they make recommendations on this basis. An expert might make a recommendation that accords with the values that I happen to have (“want to save the NHS? Wear a face covering in public”) or a recommendation that is in my interest despite my occurrent desires (“smoking is bad for you; stop it”).

If I have good reason to think that my doctor, or my plumber, or, indeed, the state epidemiologist, has a good grasp of what is in my interest, and that their recommendations are based on this, then I am in a position to have well-placed trust in their advice. But without this assurance, I may quite reasonably distrust or disagree with expert recommendations, and not simply out of ignorance or some vague “post-truth” distrust of science in general.

Cultivating trust

This demandingness of well-placed trust in expert recommendations, as opposed to expert information, has ramifications for what we can do to cultivate public trust in (at least purportedly) expert-led policy.

Consider a measure sometimes suggested to increase levels of public trust in science: increased transparency. Transparency can help build confidence in the sincerity of scientists, a crucial requirement for public trust. It can also, of course, help us to see when politics is at greater risk of distorting the science (e.g. Dominic Cummings attending SAGE meetings), and allows us to be more discerning with where we place our trust.

Transparency can also mitigate tendencies to think that anything less than a completely value-free science is invalidated by bias and prejudice. When adjudicating on matters of fact, we can distinguish good from bad use of values in science, depending on whether they play a direct or indirect role in arriving at factual conclusions. Thus we might for instance allow values to determine what we consider an acceptable level of risk of false positives for a coronavirus test, but we would not want values to determine how we interpret the results of an individual instance of the test (“I don’t want to have coronavirus – let’s run the test again”). Transparency can help us be more nuanced in our evaluation of whether a given scientific conclusion has depended on value-judgements in a legitimate way.

But it seems to me that transparency is not so effective when we are being asked to trust in the recommendations of experts. One reason for this is that it is far less easy for us to distinguish good and bad use of values in expert advice. This is because values must always play a direct role in recommendations about what to do. The obstacle to public trust in science-led policy is not, as with public trust in scientific fact, the potential for values to overreach. The challenge is instead to give the public good reason to think that the values that inform expert recommendations align with the values of those to whom the advice is issued.

There are more direct means of achieving this than transparency. I will end with two such means, both of which can be understood as ways to democratise expert-led policy.

One helpful measure to show the public that a policy does align with their interest is what is sometimes called expressive overdetermination: investing policy with multiple meanings such that it can be accepted from diverse political perspectives. Reform to French abortion law is sometimes cited as an example of this. After decades of disagreement, France adopted a law that made abortion permissible provided the individual has been granted an unreviewable certification of personal emergency. This new policy was sufficiently polyvalent to be acceptable to the most important parties to the debate; religious conservatives understood the certification to be protecting life, while pro-choice advocates saw the unreviewable nature of the certification as protection for the autonomy of women. The point was to find a way of showing that the same policy can align with the interests of multiple conflicting political groups, rather than to ask groups to either set aside, alter, or compromise on their values.

A second helpful measure, which complements expressive overdetermination, is to recruit spokespersons who are identifiable to diverse groups as similar to them in political outlook. This is sometimes called identity vouching. The strategy is to convince citizens that the relevant scientific advice, and the policy that follows that advice, is unlikely to be a threat to their interests because that same advice is accepted by those with similar values. Barack Obama attempted such a measure when he established links with Evangelical Christians such as Rick Warren, one of the 86 evangelical leaders who had signed the Evangelical Climate Initiative two years before the beginning of Obama’s presidency. The move may have had multiple intentions, but one of them is likely to have been an attempt to win over conservative Christians to Obama’s climate-change policy.

Expressive overdetermination and identity vouching are ways of showing the public that a policy is in their interests. Whether they really are successful at building public trust in policy, and more specifically in science-led policy, is a question that needs an empirical answer. What I have tried to show here is that we have good theoretical reasons to think that such additional measures are needed when we are asking the public not just to believe what scientists tell us is the case, but to comply with policy that is led by the best science.

Public trust in science comes in at least two very different forms: believing expert testimony, and following expert recommendations. Efforts to build trust in experts would do well to be sensitive to this difference.

§§§

About Matt Bennett

Matt Bennett is a lecturer with the Faculty of Philosophy at the University of Cambridge. His research and teaching cover topics in ethics (theoretical and applied), political philosophy, and moral psychology, as well as historical study of philosophical work in these areas in the post-Kantian tradition. Much of his research focuses on ethical and political phenomena that are not well understood in narrowly moral terms, and he has written about non-moral forms of trust, agency, and responsibility. From October 2020 Matt will be a postdoctoral researcher with the Leverhulme Competition and Competitiveness project at the University of Essex, where he will study different forms of competition and competitiveness and the role they play in a wide range of social practices and institutions, including markets, the arts, sciences, and sports.